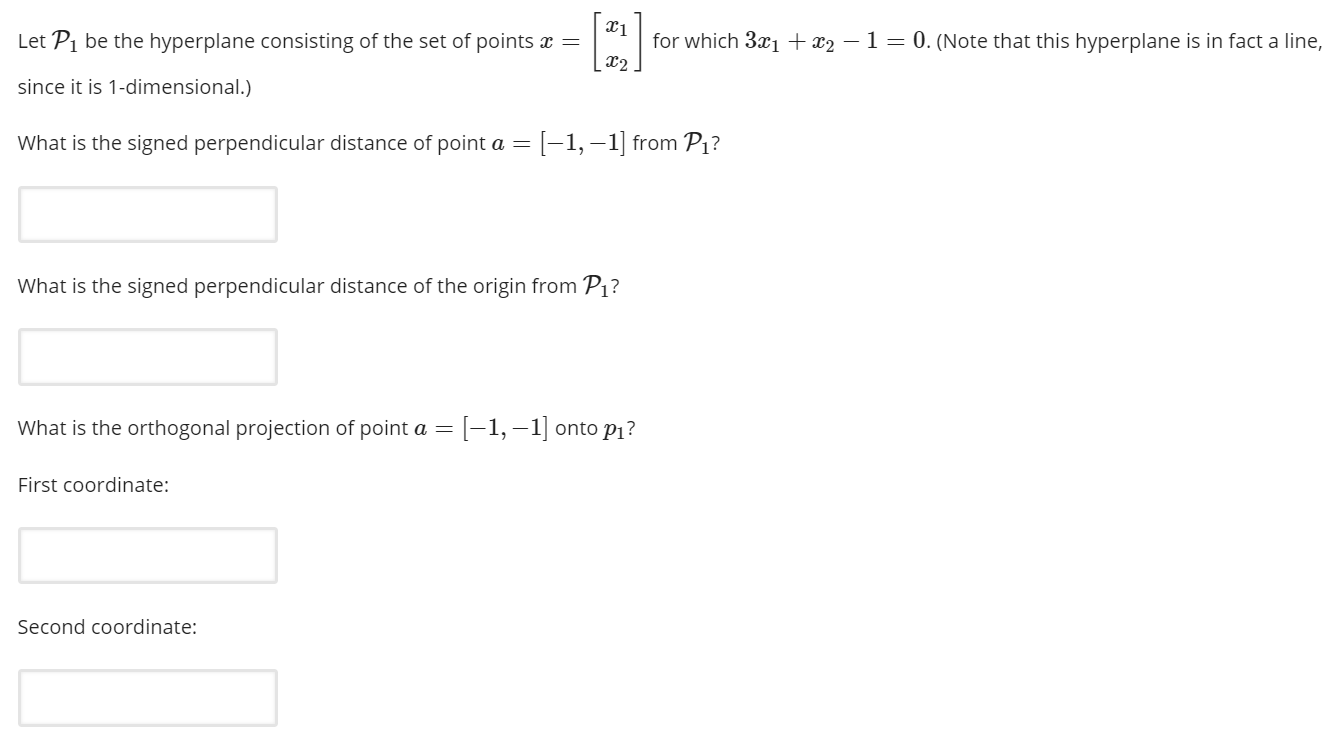

Support Vector Machines (SVM) is a very popular machine learning algorithm for classification. We still use it where we don't have a large enough dataset to train Artificial Neural Networks. In academia, almost every Machine Learning course has SVM as part of the curriculum, since it is very important for every ML student to learn and understand SVM.

However, most of the time the learning curve is not very smooth, as SVM is a vast subject by itself and most curriculums try to squeeze many topics into one course.

I found SVM very hard to understand during my ML class at university, and then struggled again to find resources that could teach me SVM from the ground up. It took me around 2 weeks just to go through all the derivations and get a basic understanding of Support Vector Machines. Hence I wanted to create a tutorial that explains every intricate part of SVM in a very beginner-friendly way. This Support Vector Machines for Beginners – Linear SVM article is the first part of a lengthy series. We will go through the concepts and the mathematical derivations, then code everything in Python without using any SVM library. If you have just completed Logistic Regression or want to brush up your knowledge of SVM, this tutorial will help you.

The size of the margin defines the confidence of the classifier, hence the widest margin is preferable. Let's select two hyperplanes based on the margin. (Remember, we will still use the margin to select the optimal separating hyperplane.) The first one has a much wider margin than the second one, hence the first hyperplane is more optimal than the second.

Finally, we can say that in the Maximal Margin Classifier, in order to classify the data, we use the separating hyperplane that has the farthest (max) minimum (min) distance from the observations. The above statement is very important, so make sure that you understand it. Watch the video or post comments if you need help.

Next, we will understand how to define the margin mathematically. We use the observable dataset to find the best margin, so that the appropriate hyperplane can be identified. We will discuss two types of margin. We can conceptually define the equation of the margin (known as the Functional Margin); the Functional Margin is used to define the theoretical aspect of the margin. However, later we need to use geometry to redefine the equation (known as the Geometric Margin).

Given a training example $(X_i, y_i)$, the Functional Margin of $(\beta, b)$ w.r.t. the training example is

$$ \hat{\gamma}_i = y_i (\beta^T X_i + b) $$

When some examples are allowed to violate the margin through slack variables $\xi_i$, the objective function becomes

$$ \min_{\beta, b, \xi} \; \frac{1}{2} \|\beta\|_2^2 + C \sum_{i=1}^n (\xi_i)^k \quad \text{subject to} \quad y_i (\beta^T X_i + b) \ge 1 - \xi_i, \quad \xi_i \ge 0 $$

Here $C \sum_{i=1}^n (\xi_i)^k$ is the loss term and $C$ is a hyperparameter which controls the trade-off between maximizing the margin and minimizing the loss.

The hyperparameter $C$ is also called the Regularization Constant. If $C \to \infty$, the margin term has no effect and the objective function just tries to minimize the loss. If $C \to 0$, the loss term has no effect and we are only trying to maximize the margin. In other words, $C$ controls the relative weighting between the twin goals of making the margin large and ensuring that most examples have a functional margin of at least 1. The value of $C$ is chosen by cross validation during training.

If $k = 1$, the loss is called Hinge Loss, and if $k = 2$ it is called Quadratic Loss. We will use both of these in later tutorials. Note that Gradient Descent can be used to optimize the objective function when $k = 1$ (Hinge Loss) by changing the inequality constraint into the equality constraint $\xi_i = \max(0, 1 - y_i (\beta^T X_i + b))$, which removes the constraints from the problem. Strictly speaking this is subgradient descent, since the hinge is not differentiable at its kink.

More generally, an objective of this kind has two parts, a loss function and a regularizer. For example, in regularized least squares with weight vector $w$, the loss function is $\sum_i \|y_i - \langle w, x_i\rangle - b\|_2^2$ and the regularizer is $\|w\|_2^2$. The weight vector is obtained by minimizing the sum of the two. One caveat is that the hinge loss does not correspond to a normalizable likelihood.
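The soft-margin objective, with its loss exponent $k$ and regularization constant $C$, can be sketched in plain Python without any SVM library. This is only a minimal sketch under stated assumptions, not the implementation from the later tutorials: the function names (`functional_margin`, `soft_margin_objective`, `fit_hinge`), the toy data, the learning rate and the epoch count are all made up for illustration. The slack is eliminated with $\xi_i = \max(0, 1 - y_i(\beta^T X_i + b))$, so that batch subgradient descent can be applied to the $k = 1$ (Hinge Loss) case.

```python
def functional_margin(beta, b, x, y):
    """Functional margin y * (beta . x + b) of one labelled example."""
    return y * (sum(w * v for w, v in zip(beta, x)) + b)


def soft_margin_objective(beta, b, X, Y, C=1.0, k=1):
    """0.5 * ||beta||^2 + C * sum(xi_i ** k), with xi_i = max(0, 1 - margin_i)."""
    reg = 0.5 * sum(w * w for w in beta)
    slacks = [max(0.0, 1.0 - functional_margin(beta, b, x, y))
              for x, y in zip(X, Y)]
    return reg + C * sum(s ** k for s in slacks)


def fit_hinge(X, Y, C=1.0, lr=0.01, epochs=500):
    """Batch subgradient descent on the k = 1 (hinge) objective.

    lr and epochs are illustrative defaults, not tuned values.
    """
    beta = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        grad_beta = list(beta)  # gradient of the 0.5 * ||beta||^2 term
        grad_b = 0.0
        for x, y in zip(X, Y):
            # Only examples with functional margin below 1 contribute to the loss.
            if functional_margin(beta, b, x, y) < 1:
                grad_beta = [g - C * y * v for g, v in zip(grad_beta, x)]
                grad_b -= C * y
        beta = [w - lr * g for w, g in zip(beta, grad_beta)]
        b -= lr * grad_b
    return beta, b


# Hypothetical toy data: two linearly separable classes labelled +1 / -1.
X = [[2.0, 2.0], [3.0, 3.0], [-2.0, -2.0], [-3.0, -1.0]]
Y = [1, 1, -1, -1]

beta, b = fit_hinge(X, Y)
margins = [functional_margin(beta, b, x, y) for x, y in zip(X, Y)]
print("beta:", beta, "b:", b)
print("functional margins:", margins)
print("hinge objective (k=1):", soft_margin_objective(beta, b, X, Y, k=1))
print("quadratic objective (k=2):", soft_margin_objective(beta, b, X, Y, k=2))
```

Raising `C` in `fit_hinge` puts more weight on margin violations, while lowering it favors a small $\|\beta\|$, which mirrors the trade-off described for the Regularization Constant. In practice `C` would be picked by cross validation rather than fixed as it is here.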
